Laser decapsulation is commonly used by professional shops to rapidly remove material before finishing with a chemical etch. When I found out that one of my friends had purchased a laser cutting system, we decided to see how well it performed at decapping.

Infrared light is absorbed strongly by most organics as well as some other materials such as glass. Most metals, especially gold, reflect IR strongly and thus should not be significantly etched by it. Silicon is nearly transparent to IR. The hope was that this would make laser ablation highly selective for packaging material over the die, leadframe, and bond wires.

Unfortunately I don't have any in-process photos. We used a raster scan pattern at fairly low power on a CO2 laser with near-continuous duty cycle.

The first sample was a Xilinx XC9572XL CPLD in a 44-pin TQFP.

[Figure: Laser-etched CPLD with die outline visible]

If you look closely, you can see the outline of the die and wire bonds beginning to appear. This probably has something to do with the differing thermal resistances of the gold bond wires, the silicon, and the copper leadframe.

Two of the other three samples (other CPLDs) turned out pretty similar except the dies weren't visible because we didn't lase quite as long.

[Figure: Laser-etched CPLD without die visible]

I popped this one under my Olympus microscope to take a closer look.

[Figure: Focal plane on top of package]

[Figure: Focal plane at bottom of cavity]

Scan lines from the laser's raster-etch pattern were clearly visible. At first glance the laser was quite effective at removing material; however, higher magnification gave reason to believe the process was not as effective as I had hoped.

[Figure: Raster lines in molding compound]

[Figure: Raster lines in molding compound]

Most engineers are not aware that "plastic" IC packages are actually not made of plastic. (The curious reader may find the "epoxy" page on siliconpr0n.org a worthwhile read.)

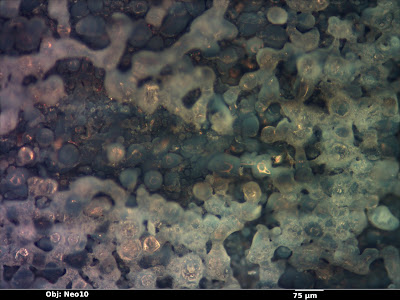

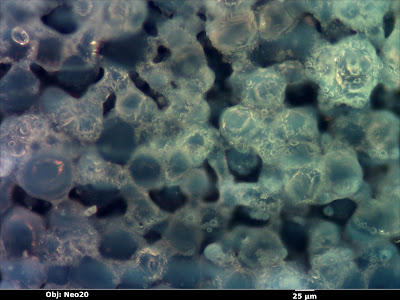

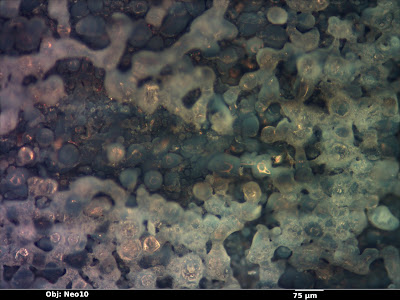

Typical "plastic" IC molding compounds are actually composite materials made from glass spheres of varying sizes as filler in a black epoxy resin matrix. The epoxy binds the glass together and blocks light from reaching the die, where induced photocurrents could interfere with the circuits. Unfortunately the epoxy has a thermal expansion coefficient significantly different from that of the die, so glass beads are added as a filler to counteract this effect. Glass usually makes up a large share (80 or 90 percent) of the molding compound.
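To get a feel for why so much glass is needed, here is a rough back-of-the-envelope sketch using a simple linear rule of mixtures. The expansion coefficients are assumed ballpark values for fused silica, unfilled epoxy, and silicon, not measurements of any real molding compound. The function name `mixture_cte` is made up for this example, and treating the 80 to 90 percent figure as a volume fraction is also an assumption. Real composites deviate from this linear estimate, so take it as an illustration only.

```python
# Illustrative sketch only: the property values below are rough, assumed
# ballpark figures, not data for any specific molding compound.

CTE_SILICA_PPM = 0.5    # assumed: fused silica filler, ppm/K
CTE_EPOXY_PPM = 60.0    # assumed: unfilled epoxy resin, ppm/K
CTE_SILICON_PPM = 2.6   # assumed: silicon die, ppm/K


def mixture_cte(filler_fraction, cte_filler=CTE_SILICA_PPM, cte_matrix=CTE_EPOXY_PPM):
    """Linear rule-of-mixtures estimate of the composite CTE in ppm/K.

    filler_fraction is the (assumed volume) fraction of glass, from 0 to 1.
    """
    if not 0.0 <= filler_fraction <= 1.0:
        raise ValueError("filler_fraction must be between 0 and 1")
    return filler_fraction * cte_filler + (1.0 - filler_fraction) * cte_matrix


if __name__ == "__main__":
    # Compare a few filler loadings against the assumed value for silicon.
    for fraction in (0.0, 0.5, 0.8, 0.9):
        cte = mixture_cte(fraction)
        print(f"{fraction:.0%} glass: ~{cte:.1f} ppm/K "
              f"(silicon: ~{CTE_SILICON_PPM} ppm/K)")
```

Under these assumed numbers, the estimate drops from 60 ppm/K with no filler to roughly 6.5 ppm/K at 90 percent glass, which is far closer to silicon than bare epoxy would be.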

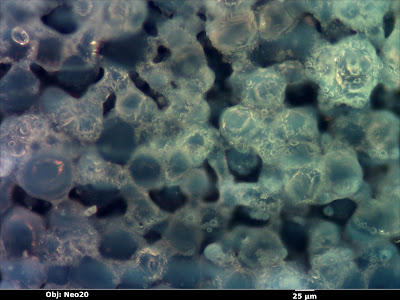

My hope was that the laser would vaporize the epoxy and glass cleanly without damaging the die or bond wires. Instead, it seems that the glass near the edge of the beam fused together, producing a mess which would be difficult or impossible to remove. This effect was even more pronounced in the first sample.

The edge of the die stood out strongly in this sample even though the die is still quite a bit below the surface. Perhaps the die (or the die-attach paddle under it) is a good thermal conductor and acted to heatsink the glass, causing it to melt rather than vaporize?

[Figure: The first sample seen earlier in the article, showing the corner of the die]

A closeup showed a melted, blasted mess of glass. About the only things that can easily remove this are mechanical abrasion and HF (hydrofluoric acid), both of which would probably destroy the die.

[Figure: Fused glass particles]

[Figure: Fused glass particles]

I then took a look at the last sample, a PIC18F4553. We had etched this one all the way down to the die just to see what would happen.

[Figure: Exposed PIC18F4553 die]

[Figure: Edge of the die showing bond pads]

Most bond wires were completely gone. It appeared that the glass had gotten so hot that it melted the wires even though they did not absorb the laser energy directly. The large reddish sphere at the center of the frame is what remains of a ball bond that did not completely vanish.

The surface of the die was also covered by fused glass. No fine structure at all was visible.

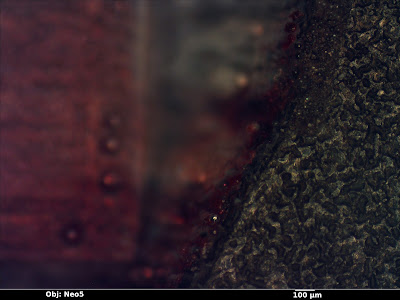

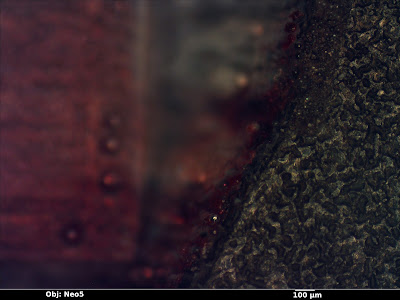

Looking at the overview photo, reddish spots were visible around the edge of the die and package. I decided to take a closer look in hopes of figuring out what was going on there.

[Figure: Red glass on the edge of the hole]

I was rather confused at first because there should have only been metal, glass, and plastic in that area - and none of these were red. The red areas had a glassy texture to them, suggesting that they were partly or mostly made of fused molding compound.

Some reading on stained glass provided the answer: cranberry glass. This is a colloid of gold nanoparticles suspended in glass, giving it color from scattering incoming light.

The normal process for making cranberry glass is to mix Au2O3 in with the raw materials before smelting them together. At high temperatures the oxide decomposes, leaving gold particles suspended in the glass. It appears that I've unintentionally found a second synthesis which avoids the oxidation step: flash vaporization of solid gold and glass followed by condensation of the vapor on a cold surface.