Recent news headlines have made a big deal of Apple encrypting more of the storage on their handsets and claiming not to have a key. Depending on who you ask, this is either a huge win for privacy or a massive blow to intelligence collection and law enforcement capabilities. I'm going to try to avoid expressing any opinions on government policy here and focus on the technical details of what is and is not possible - and why disk encryption isn't as much of a game-changer as people seem to think.

Matthew Green at Johns Hopkins wrote a very nice article on the subject recently, but there are a few points I feel it's worth going into more detail on.

The general case here is that of two people, Alice and Bob, communicating with iPhones while a third party, Eve, attempts to discover something about their communications.

First off, the changes in iOS 8 are encrypting data on disk. Voice calls, SMS, and Internet packets still cross the carrier's network in cleartext. Carriers are legally required (by CALEA in the United States, and similar laws in other countries) to provide a means for law enforcement or intelligence to access this data.

In addition, if Eve can get within radio range of Alice or Bob, she can record the conversation off the air. Although the radio links are normally encrypted, many of these cryptosystems are weak and can be defeated in a reasonable amount of time by cryptanalysis. Numerous methods are available for executing man-in-the-middle attacks between handsets and cell towers, which can further enhance Eve's interception capabilities.

Second, if Eve is able to communicate with Alice or Bob's phone directly (via Wi-Fi, SMS, MITM of the radio link, MITM further upstream on the Internet, physical access to the USB port, or using spearphishing techniques to convince them to view a suitably crafted e-mail or website) she may be able to use a 0day exploit to gain code execution on the handset and bypass any/all encryption by reading the cleartext out of RAM while the handset is unlocked. Although this does require that Eve have a staff of skilled hackers to find a 0day, or deep pockets to buy one, when dealing with a nation-state level adversary this is hardly unrealistic.

Although this does not provide Eve with the ability to exfiltrate the device encryption key (UID) directly, this is unnecessary if cleartext can be read directly. This is a case of the general trend we've been seeing for a while - encryption is no longer the weakest link, so attackers figure out ways to get around it rather than smash through.

Third, in many cases the contents of SMS/voice are not even required. If the police wish to geolocate the phone of a kidnapping victim (or a suspect) then triangulation via cell towers and the phone's GPS, using the existing e911 infrastructure, may be sufficient. If intelligence is attempting to perform contact tracing from a known target to other entities who might be of interest, then the "who called who when" metadata is of much more value than the contents of the calls.

There is only one situation where disk encryption is potentially useful: if Alice or Bob's phone falls into Eve's hands while locked and she wishes to extract information from it. In this narrow case, disk encryption does make it substantially more difficult, or even impossible, for Eve to recover the cleartext of the encrypted data.

Unfortunately for Alice and Bob, a well-equipped attacker has several options here (which may vary depending on exactly how Apple's implementation works; many of the details are not public).

If the Secure Enclave code is able to read the UID key, then it may be possible to exfiltrate the key using software-based methods. This could potentially be done by finding a vulnerability in the Secure Enclave (as was previously done with the TrustZone kernel on Qualcomm Android devices to unlock the bootloader). In addition, if Eve works for an intelligence agency, she could potentially send an NSL to Apple demanding that they write firmware, or sign an agency-provided image, to dump the UID off a handset.

In the extreme case, it might even be possible for Eve to compromise Apple's network and exfiltrate the certificate used for signing Secure Enclave images. (There is precedent for this sort of attack - the authors of Stuxnet appear to have stolen a driver-signing certificate from Realtek.)

If Apple did their job properly, however, the UID is completely inaccessible to software and is locked up in some kind of on-die hardware security module (HSM). This means that even if Eve is able to execute arbitrary code on the device while it is locked, she must bruteforce the passcode on the device itself - a very slow and time-consuming process.

In this case, an attacker may still be able to execute an invasive physical attack. By depackaging the SoC, etching or polishing down to the polysilicon layer, and looking at the surface of the die with an electron microscope, she can locate the fuse bits and read them directly off the surface of the silicon.

I am fairly confident from previous experience that I could do it myself, with equipment available to me at school, if I had a couple of phones to destructively analyze and a few tens of thousands of dollars to spend on lab time. This is pocket change for an intelligence agency.

Once the UID is extracted, and the encrypted disk contents dumped from the flash chips, an offline bruteforce using GPUs, FPGAs, or ASICs could be used to recover the key in a fairly short time. Some very rough numbers I ran recently suggest that a 6-character upper/lowercase alphanumeric SHA-1 password could be bruteforced in around 25 milliseconds (1.2 trillion guesses per second) by a 2-rack, 2500-chip FPGA cluster costing less than $250,000. Luckily, the iPhone uses an iterated key-derivation function which is substantially slower.

The key derivation function used on the iPhone takes approximately 50 milliseconds on the iPhone's CPU, which comes out to about 70 million clock cycles. Performance studies of AES on a Cortex-A8 show about 25 cycles per byte for encryption plus 236 cycles for the key schedule. The key schedule setup only has to be done once so if the key is 32 bytes then we have 800 cycles per iteration, or about 87,500 iterations.

It's hard to give exact performance numbers for AES bruteforcing on an FPGA without building a cracker, but if pipelined to one AES iteration per clock cycle at 400 MHz (reasonable for a modern 28nm FPGA) an attacker could easily get around 4500 guesses per second per hash pipeline. Assuming at least two pipelines per FPGA, the proposed FPGA cluster would give 22.5 million guesses per second - sufficient to break a 6-character case-sensitive alphanumeric password in around half an hour. If we limit ourselves to lowercase letters and numbers only, it would only take 45 seconds instead of the five and a half years Apple claims bruteforcing on the phone would take. Even 8-character alphanumeric case-sensitive passwords could be within reach (about eight weeks on average, or faster if the password contains predictable patterns like dictionary words).
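
As a rough sanity check, the arithmetic above can be reproduced in a few lines of Python. This is only a sketch: every constant is a figure quoted in the text, the function names are made up for illustration, and a real cracker would be limited by details (routing, memory, key schedule handling) that this ignores.

```python
# Back-of-the-envelope reproduction of the estimates above.
# All constants are figures quoted in the text; names are illustrative only.
KDF_CYCLES = 70_000_000          # ~50 ms of key derivation on the phone's CPU
CYCLES_PER_ITERATION = 25 * 32   # 25 cycles/byte * 32 bytes = 800 cycles
FPGA_CLOCK_HZ = 400_000_000      # assumes one AES iteration per clock cycle
PIPELINES_PER_FPGA = 2
FPGAS_IN_CLUSTER = 2500


def kdf_iterations() -> float:
    """Estimated number of AES iterations inside one key derivation."""
    return KDF_CYCLES / CYCLES_PER_ITERATION


def cluster_guesses_per_second() -> float:
    """Passcode guesses per second for the whole hypothetical cluster."""
    per_pipeline = FPGA_CLOCK_HZ / kdf_iterations()
    return per_pipeline * PIPELINES_PER_FPGA * FPGAS_IN_CLUSTER


def average_crack_seconds(alphabet_size: int, length: int, rate: float) -> float:
    """Expected time to hit the right passcode: half the keyspace on average."""
    keyspace = alphabet_size ** length
    return keyspace / 2 / rate


RATE = cluster_guesses_per_second()
print(f"iterations per guess: {kdf_iterations():.0f}")
print(f"cluster rate: {RATE / 1e6:.1f} M guesses/s")
print(f"6 chars, a-z0-9: {average_crack_seconds(36, 6, RATE):.0f} s")
print(f"6 chars, a-zA-Z0-9: {average_crack_seconds(62, 6, RATE) / 60:.0f} min")
print(f"8 chars, a-zA-Z0-9: {average_crack_seconds(62, 8, RATE) / 604800:.1f} weeks")
```

Without rounding the per-pipeline rate down to 4500, the cluster comes out a little under 23 million guesses per second, so the results land slightly below the rounded figures in the text.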

Saturday, October 4, 2014

Wednesday, September 17, 2014

Threat modeling for FPGA software backdoors

I've been interested in the security of compilers and related toolchains ever since I first read about Ken Thompson's compiler backdoor many years ago. In a nutshell, this famous backdoor does two things:

- Whenever the backdoored C compiler compiles the "login" command, it adds a second code path that accepts a hard-coded default password in addition to the user's actual password

- Whenever the backdoored C compiler compiles the unmodified source code of itself, it adds the backdoor to the resulting binary.

Recently, there has also been a lot of concern over backdoors in integrated circuits (either added at the source code level by a malicious employee, or at the netlist/layout level by a third-party fab). DARPA even has a program dedicated to figuring out ways of eliminating or detecting such backdoors. A 2010 paper stemming from the CSAW Embedded Systems Challenge presents a detailed taxonomy of such hardware Trojans.

As far as I can tell, the majority of research into hardware Trojans has been focused on detecting them, assuming the adversary has managed to backdoor the design in some way that provides him with a tactical or strategic advantage. I have had difficulty finding detailed threat modeling research quantifying the capability of the adversary under particular scenarios.

When we turn our attention to FPGAs, things quickly become even more interesting. There are several major differences between FPGAs and ASICs from a security perspective which may grant the adversary greater or lesser capability than with an ASIC.

Attacks at the IC fab

The function of an ASIC is largely defined at fab time (except for RAM-based firmware) while FPGAs are extremely flexible. When trying to backdoor FPGA silicon the adversary has no idea what product(s) the chip will eventually make it into. They don't even know which pins on the die will be used as inputs and which as outputs.

I suspect that this places substantial bounds on the capability of an attacker "Malfab" targeting FPGA silicon at the fab (or pre-fab RTL/layout) level since the actual RTL being targeted does not even exist yet. To start, we consider a generic FPGA without any hard IP blocks:

- Malfab does not know which flipflops/SRAM/LUTs will eventually contain sensitive data, nor what format this data may take.

- Malfab does not know which I/O pins may be connected to an external communications interface useful for command-and-control.

A generic backdoor under these constraints would presumably need two components:

- A detector, which searches I/Os for a magic sync sequence

- A connection from that detector to the FPGA's internal configuration access port (ICAP), used for partial reconfiguration and readback.

I am not aware of any published work on the feasibility of such a backdoor. However, I am skeptical that a sufficiently generic Trojan could be made simple enough to evade even casual reverse engineering of the I/O circuitry, and fast enough to not seriously cripple performance of the device.

Unfortunately for our defender Alice, modern FPGAs often contain hard IP blocks such as SERDES and RAM controllers. These present a far more attractive target to Malfab as their functionality is largely known in advance.

It is not hard to imagine, for example, a malicious patch to the RAM controller block which searches each byte group for a magic sync sequence written to consecutive addresses, then executes commands from the next few bytes. As long as Malfab is able to cause the target's system to write data of his choice to consecutive RAM addresses (perhaps by sending it as the payload of an Ethernet frame, which is then buffered in RAM) he can execute arbitrary commands on the backdoor engine. If one of these commands is "write data from SLICE_X37Y42.A5FF to RAM address 0xdeadbeef", and Malfab can predict the location of a transmit buffer of some sort, he now has the ability to exfiltrate arbitrary state from Alice's hardware.

I thus conjecture that the only feasible way to backdoor a modern FPGA at fab time is through hard IP. If we ensure that the JTAG interface (the one hard IP block whose use cannot be avoided) is not connected to attacker-controlled interfaces, use off-die SERDES, and use softcore RAM controllers on non-standard pins, it is unlikely that Malfab will be able to meaningfully affect the security of the resulting circuit.

Attacks on the toolchain

We now turn our attention to a second adversary, Maldev - the malicious development tool. Maldev works for the FPGA vendor, has compromised the source repository for their toolchain, has MITMed the download of the toolchain installer, or has penetrated Alice's network and patched the software on her computer.

Since FPGAs are inherently closed systems (more so than ASICs, in which multiple competing toolchains exist), Alice has no choice but to use the FPGA vendor's binary-blob toolchain. Although it is possible in theory for a dedicated team with sufficient time and budget to reverse engineer the FPGA and/or toolchain and create a trusted open-source development suite, I discount the possibility for the scope of this section since a fully trusted toolchain is presumably free of interesting backdoors ;)

Maldev has many capabilities that Malfab lacks, since he can execute arbitrary code on Alice's computer. Assuming that Alice is (justifiably) paranoid about the provenance of her FPGA software and runs it on a dedicated machine in a DMZ (so that it cannot infect the remainder of her network), this is equivalent to having full access to her RTL and netlist at all stages of design.

If Alice gives her development workstation Internet access, Maldev now has the ability to upload her RTL source and/or netlist, modify it at will on his computer, and then push patches back. This is trivially equivalent to a full defeat of the entire system.

Things become more interesting when we cut off command-and-control access. This is a realistic scenario if Alice is a military/defense user doing development on a classified network with no Internet connection.

The simplest attack is for Maldev to store a list of source file hashes and patches in the compromised binary. While this is very limited (custom-developed code cannot be attacked at all), many design teams are likely to use a small set of stock communications IP such as the Xilinx Tri-Mode Ethernet MAC, so patching these may be sufficient to provide him with an attack vector on the target system. Looking for AXI interconnect IP provides Maldev with a topology map of the target SoC.

Another option is graph-based analytics on the netlist at various stages of synthesis. For example, by looking for a 32-bit register initialized to 0x67452301, which is in a strongly connected component with three other registers initialized to 0xefcdab89, 0x98badcfe, and 0x10325476, Maldev can say with a high probability that he has found an implementation of MD5 and located the state registers. If he then finds a 128-bit comparator between these values and another 128-bit value, he has located a hash match check (and may insert a backdoor there). Similar techniques may be used to look for other cryptography.
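
To make the graph search concrete, here is a minimal sketch of the MD5 detection step. The netlist model is an assumption made for illustration (a dict of register initial values plus a dict of register-to-register dataflow edges); real synthesis tools expose nothing this tidy, and the register names in the example are invented.

```python
from typing import Dict, List, Optional, Set

# The four MD5 initial state words named in the text.
MD5_INIT = {0x67452301, 0xEFCDAB89, 0x98BADCFE, 0x10325476}


def strongly_connected_components(graph: Dict[str, Set[str]]) -> List[Set[str]]:
    """Kosaraju's algorithm (recursive, so only suitable for small graphs)."""
    nodes = set(graph) | {dst for dsts in graph.values() for dst in dsts}
    order: List[str] = []
    seen: Set[str] = set()

    def visit(node: str) -> None:
        seen.add(node)
        for nxt in graph.get(node, ()):
            if nxt not in seen:
                visit(nxt)
        order.append(node)  # record finishing order

    for node in sorted(nodes):
        if node not in seen:
            visit(node)

    # Build the reversed graph for the second pass.
    reverse: Dict[str, Set[str]] = {node: set() for node in nodes}
    for src, dsts in graph.items():
        for dst in dsts:
            reverse[dst].add(src)

    components: List[Set[str]] = []
    assigned: Set[str] = set()

    def collect(node: str, component: Set[str]) -> None:
        assigned.add(node)
        component.add(node)
        for prev in reverse[node]:
            if prev not in assigned:
                collect(prev, component)

    for node in reversed(order):
        if node not in assigned:
            component: Set[str] = set()
            collect(node, component)
            components.append(component)
    return components


def find_md5_state(init_values: Dict[str, int],
                   dataflow: Dict[str, Set[str]]) -> Optional[List[str]]:
    """Return the registers holding all four MD5 init words in one SCC, if any."""
    for component in strongly_connected_components(dataflow):
        found = {init_values[r]: r for r in component
                 if init_values.get(r) in MD5_INIT}
        if set(found) == MD5_INIT:
            return sorted(found.values())
    return None


# Hypothetical netlist: a/b/c/d rotate into each other every round, "cnt"
# feeds the round logic, and "decoy" shares an init value but is unconnected.
INIT = {"a": 0x67452301, "b": 0xEFCDAB89, "c": 0x98BADCFE,
        "d": 0x10325476, "cnt": 0, "decoy": 0x67452301}
FLOW = {"a": {"b"}, "b": {"b", "c"}, "c": {"b", "d"}, "d": {"a", "b"},
        "cnt": {"cnt", "b"}}
print(find_md5_state(INIT, FLOW))
```

Requiring all four constants inside a single strongly connected component is what filters out coincidental matches such as the unconnected decoy register.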

Conclusions

If FPGA development is done using silicon purchased before the start of the project, on an air-gapped machine, and without using any pre-made IP, then some bounds can clearly be placed on the adversary's capability.

I have not seen any formal threat modeling studies on this subject, although I haven't spent a ton of time looking for them due to research obligations. If anyone is aware of published work in this field I'm extremely interested.

Tuesday, August 26, 2014

Updates and pending projects

It's been a while since I've written anything here so here's a bit of a brain-dump on upcoming stuff that will find its way here eventually.

Thesis stuff

This has been eating the bulk of my time lately. I just submitted a paper to ACM Computing Surveys and am working on a conference paper for EDSC that's due in two weeks or so. With any luck the thesis itself will be finished by May and I can graduate.

Lab improvements

I'm in the process of fixing up my lab to solve a bunch of the annoying things that have been bugging me. Most/all of these will be expanded into a full post once it's closer to completion.

- Racking the FPGA cluster

The "raised floor" FPGA cluster was a nice idea but the 2D structure doesn't scale. I've filled almost all of it and I really need the desk space for other things.

I ordered a 3U Eurocard subrack from Digikey and once it arrives will be making laser-cut plastic shims to load all of my small boards into it. The first card made for the subrack is already inbound: a 3U x 4HP 10-port USB hub to replace several of the 4-port hubs I'm using now. It will be hosted by my Beaglebone Black, which will function as a front-end node bridging the USB-UART and USB-JTAG ports out to Ethernet.

The AC701 board is huge (well over 3U on the shortest dimension) so I may end up moving it into one of the two empty 1U Sun "pizza box" server cases I have lying around. If this happens the Atlys boards may accompany it since they won't fit comfortably in 3U either.

- Ethernet - JTAG card

FTDI-based JTAG is simple and easy but the chips are pricey and to run in a networked environment you need a host PC/server. I'm in the early stages of designing an XC6SLX45 based board with a gigabit Ethernet port, IPv6 TCP offload engine, and 16 buffered, level-shifted JTAG ports. It will speak the libjtaghal jtagd protocol directly, without needing a CPU or operating system, for ultra-low latency and near zero jitter.

- Logo

I've gone long enough without having a nice logo to put on my boards, enclosures, etc. At some point I should come up with one...

Test equipment

I've gradually grown fed up with current test equipment. Why would I want to fiddle with knobs and squint at a tiny 320x240 LCD when I could view the signal on my 7040x1080 quad-screen setup or, better yet, the triple 4K displays I'm going to buy when prices come down a bit? Why waste bench space on dials and buttons when I could just minimize or close the control application when it's not in use? As someone who spends most of his time sitting in front of a computer I'd much prefer a "glass cockpit" lab with few physical buttons.

I'm now planning to make a suite of test equipment based on the Unix philosophy: do one thing and do it well. Each board will be a 3U Eurocard with a power input on the back and Ethernet + probe/signal connections on the front. They will implement the low-level signal capture/generation, buffering, and trigger logic but then leave all of the analysis and configuration UI to a PC-based application, connected over 1- or 10-gigabit Ethernet depending on the tool. Projects are listed in the approximate order that I plan to build them.

- 4-channel TDR for testing cat5e cable installs

This design will be based on the same general concept as a SAR ADC, with the sampling matrix transposed. Instead of gradually refining one sample before proceeding to the next, the entire waveform will be sampled once, then gradually refined over time.

Each channel of the TDR will consist of a high-speed 100-ohm differential output from a Spartan-6 FPGA to generate a pulse with very fast rise time, AC coupled into one pair of a standard RJ45 jack which will plug into the cable under test.

On the input stage, the differential signals will be subtracted by an opamp, then the resulting single-ended voltage will be compared against a DAC-generated reference voltage using an LMH7324SQ or similar ultra-fast comparator. The comparator will have LVDS outputs driving a differential input on the Spartan-6, which can sample DDR LVDS at up to 1 GHz. This will produce a single horizontal slice across a plot of impedance mismatch/reflection intensity vs time/distance.

By sending multiple pulses in sequence with successively increasing reference voltages from the DAC, it should be possible to reconstruct an entire TDR trace to at least 8 bits of precision for a fraction of the cost of even a single 1 GSa/s ADC.
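
The reconstruction idea can be modeled in a few lines of Python. This is a simplified sketch, not the planned firmware: it assumes the reflection is identical from pulse to pulse and noise-free, and the function names and voltage range are invented for the example.

```python
from typing import List


def comparator_slice(waveform: List[float], vref: float) -> List[bool]:
    """One pulse: the comparator reports, per sample, whether the signal
    exceeds the current DAC reference. This is one horizontal slice."""
    return [v > vref for v in waveform]


def reconstruct(waveform: List[float], v_min: float, v_max: float,
                bits: int = 8) -> List[float]:
    """Sweep the reference across the range, one pulse per DAC code, and
    count how many thresholds each sample exceeded."""
    levels = 2 ** bits
    step = (v_max - v_min) / levels
    counts = [0] * len(waveform)
    for code in range(1, levels):
        vref = v_min + code * step
        for i, above in enumerate(comparator_slice(waveform, vref)):
            counts[i] += above
    # Each count places the sample inside one step-wide bin; report the
    # middle of that bin as the estimate.
    return [v_min + (c + 0.5) * step for c in counts]


# Hypothetical reflection: flat, a positive bump, a negative dip, flat again.
TRUE_TRACE = [0.0, 0.1, 0.45, -0.3, 0.0]
ESTIMATE = reconstruct(TRUE_TRACE, v_min=-0.5, v_max=0.5, bits=8)
print(ESTIMATE)
```

In this idealized model the error never exceeds half a DAC step, at the cost of one pulse per reference level (255 pulses for 8 bits).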

Given the 5 ns/m nominal propagation delay of cat5 cable (10 ns/m after round trip delay), the theoretical spatial resolution limit is 10 cm, although I expect noise and sampling issues to reduce usable positioning accuracy to 20-50 cm. The TDR will also need to be calibrated with a known length of cable from the same lot if exact propagation delays are needed to compute the precise location of a fault.

- 10-channel DC power supply

Offshoot of the PDU. Ten-channel buck converter stepping 24 VDC down to an adjustable output voltage, operating frequency around 1.5 MHz. Digital feedback loop with support for soft-start, state machine based current limiting and overcurrent shutdown, etc.

More details TBD once I have time to flesh out the concept a bit.

- Gigabit Ethernet protocol analyzer

Spartan-6 connected to three 1gbaseT PHYs. Packets coming in port A are sent out port B unchanged, and vice versa. All traffic going either way is buffered in some kind of RAM, then encapsulated inside a TCP stream and sent out port C to an analysis computer which can record stats, write a pcap, etc.

The capture will be raw layer-1 and include the preamble, FCS, metadata describing link state changes and autonegotiation status, and cycle-accurate timestamps. Error injection may be implemented eventually if needed.

- 128-channel logic analyzer

This will be based on RED TIN, my existing FPGA-based ILA, but with more features and an external 4GB DDR3 SODIMM for buffering packet data. A 64-bit data bus at 1066 MT/s should be more than capable of pushing 32 channels at 1 GHz, 64 at 500 MHz, or 128 at 250 MHz. The input standards planned to be supported are LVCMOS from 1.5 to 3.3V, LVDS, SSTL, and possibly 5V LVTTL if the input buffer has sufficient range. I haven't looked into CML yet but may add this as well.

The FPGA board will connect to the host PC via a 10gbit Ethernet link using SFP+ direct attach cabling. Dumping 4GB (32 Gb) of data over 10gbe should take somewhere around 4 seconds after protocol overhead, or less if the capture depth is set to less than the maximum.
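
A quick check of these bandwidth figures, as a sketch only. The DDR3 number is the theoretical peak (it ignores refresh and bus turnaround), and the 80% link efficiency is an assumption chosen to match the "around 4 seconds" estimate rather than a measured value.

```python
# Figures from the text; names are illustrative only.
DDR3_BUS_BITS = 64
DDR3_TRANSFERS_PER_SEC = 1066e6
LINK_BPS = 10e9                 # 10-gigabit Ethernet
CAPTURE_BYTES = 4e9             # the text treats 4 GB as 32 Gb
ASSUMED_LINK_EFFICIENCY = 0.8   # hypothetical allowance for protocol overhead

# (channels, sample rate in Hz) for each planned capture mode
MODES = [(32, 1e9), (64, 500e6), (128, 250e6)]


def ddr3_peak_bps() -> float:
    """Theoretical peak memory bandwidth in bits per second."""
    return DDR3_BUS_BITS * DDR3_TRANSFERS_PER_SEC


def capture_bps(channels: int, sample_rate_hz: float) -> float:
    """Raw capture rate: one bit per channel per sample."""
    return channels * sample_rate_hz


def dump_seconds(capture_bytes: float, link_bps: float, efficiency: float) -> float:
    """Time to move a full capture buffer over the link."""
    return capture_bytes * 8 / (link_bps * efficiency)


for channels, rate in MODES:
    print(f"{channels} ch @ {rate / 1e6:.0f} MHz: "
          f"{capture_bps(channels, rate) / 1e9:.0f} Gbps "
          f"of {ddr3_peak_bps() / 1e9:.1f} Gbps peak")
print(f"full dump: {dump_seconds(CAPTURE_BYTES, LINK_BPS, ASSUMED_LINK_EFFICIENCY):.1f} s")
```

All three modes need the same 32 Gbps, a bit under half of the peak memory bandwidth, which leaves room for the real-world inefficiencies ignored here.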

The FPGA board will connect via matched-impedance 100-ohm parallel cables (perhaps something like DigiKey 670-2626-ND) to eight active probe cards. Each probe card will have a MICTOR or similar connector to the DUT providing numerous grounds, optional SSTL Vref, 16 digital inputs, and two clock/strobe inputs with optional complement inputs for differential operation. An internal DAC will allow generation of a threshold voltage for single-ended LVCMOS inputs.

The probe card input stage will consist of the following for each channel:

- Unity-gain buffer to reduce capacitive load on the DUT

- Low-speed precision analog mux to select external Vref (for SSTL) or internal Vref (for LVCMOS). This threshold voltage may be shared across several/all channels in the probe card, TBD.

- High-speed LVDS-output comparator to compare single-ended inputs against the muxed Vref.

- 2:1 LVDS mux for selecting single-ended or differential inputs. Input A is the LVDS output from the comparator, input B is the buffered differential input from this and the adjacent channel. To reduce bit-to-bit skew all channels will have this mux even though it's redundant for odd-numbered channels.

[Figure: LA input stage for two single-ended or one differential channel]

- 4-channel DSO

This will use the same FPGA + DDR3 + 10gbe back end as the LA, but with the digital input stage replaced by an AFE and two of TI's 1.5 GSa/s dual ADCs with interleaving support.

This will give me either two channels at 3 GSa/s with a target bandwidth of 500 MHz, or four channels at 1.5 GSa/s with a target bandwidth of 250 MHz. The resulting raw data rate will be 3 GSa/s * 8 bits * 2 channels or 48 Gbps, and should comfortably fit within the capacity of a 64-bit DDR3 1066 interface.

I have no more details at this point as my mixed-signal-fu is not yet to the point that I can design a suitable AFE. This will be the last project on the list to be done due to both the cost of components and the difficulty.

Monday, March 31, 2014

Getting my feet wet with invasive attacks, part 2: The attack

This is part 2 of a 2-part series. Part 1, Target Recon, is here.

Once I knew what all of the wires in the ZIA did, the next step was to plan an attack to read signals out.

I decapped an XC2C32A with concentrated sulfuric acid and soldered it to my dev board to verify that it was alive and kicking.

[Figure: Simple CR-II dev board with integrated FTDI USB-JTAG]

After testing I desoldered the sample and brought it up to campus to introduce it to some 30 keV Ga+ ions.

I figured that all of the exposed packaging would charge, so I'd need to coat the sample with something. I normally used sputtered Pt but this is almost impossible to remove after deposition so I decided to try evaporated carbon, which can be removed nicely with oxygen plasma among other things.

I suited up for the cleanroom and met David Frey, their resident SEM/FIB expert, in front of the Zeiss 1540 FIB system. He's a former Zeiss engineer who's very protective of his "baby" and since I had never used a FIB before there was no way he was going to let me touch his, so he did all of the work while I watched. (I don't really blame him... FIB chambers are pretty cramped and it's easy to cause expensive damage by smashing into something or other. Several SEMs I've used have had one detector or another go offline for repair after a more careless user broke something.)

The first step was to mill a hole through the 900 nm or so of silicon nitride overglass using the ion beam.

[Figure: Newly added via, not yet filled]

Once the via was drilled and it appeared we had made contact with the signal trace, it was time to backfill with platinum. The video below is sped up 10x to avoid boring my readers ;)

Metal deposition in a FIB is basically CVD: a precursor gas is injected into the chamber near the sample and it decomposes under the influence of beam-generated secondary electrons.

Once the via was filled we put down a large (20 μm square) pad we could hit with an electrical probe needle.

Once everything was done and the chamber was vented I removed the carbon coating with oxygen plasma (the cleanroom's standard photoresist removal process), packaged up my sample, went home, and soldered it back to the board for testing. After powering it up... nothing! The device was as dead as a doornail; I couldn't even get a JTAG IDCODE from it.

I repeated the experiment a week or two later, this time soldering bare stub wires to the pins so I could test by plugging the chip into a breadboard directly. This failed as well, but watching my benchtop power supply gave me a critical piece of information: while VCCINT was consuming the expected power (essentially zero), VCCIO was leaking by upwards of 20 mA.

This ruled out beam-induced damage as I had not been hitting any of the I/O circuitry with the ion beam. Assuming that the carbon evaporation process was safe (it's used all the time on fragile samples, so this seemed a reasonably safe assumption for the time being), this left only the plasma clean as the potential failure point.

I realized what was going on almost instantly: the antenna effect. The bond wire and leadframe connected to each pad in the device were acting as an antenna and coupling some of the 13.56 MHz RF energy from the plasma into the input buffers, blowing out the ESD diodes and input transistors, and leaving me with a dead chip.

This left me with two possible ways to proceed: removing the coating by chemical means (a strong oxidizer could work), or not coating at all. I decided to try the latter since there were fewer steps to go wrong.

Somewhat surprisingly, the cleanroom staff had very limited experience working with circuit edits - almost all of their FIB work was process metrology and failure analysis rather than rework, so they usually coated the samples.

I decided to get trained on RPI's other FIB, the brand-new FEI Versa 3D. It's operated by the materials science staff, who are a bit less of the "helicopter parent" type and were actually willing to give me hands-on training.

The Versa can do almost everything the older 1540 can do, in some cases better. Its one limitation is that it only has a single-channel gas injection system (platinum) while the 1540 is plumbed for platinum, tungsten, SiO2, and two gas-assisted etches.

After a training session I was ready to go in for an actual circuit edit.

The Versa is the most modern piece of equipment I've used to date: it doesn't even have the classical joystick for moving the stage around. Almost everything is controlled by the mouse, although a USB-based knob panel for adjusting magnification, focus, and stigmators is still provided for those who prefer to turn something with their fingers.

Its other nice feature is the quad-image view which lets you simultaneously view an ion beam image, an e-beam image, the IR camera inside the chamber (very helpful for not crashing your sample into a $10,000 objective lens!), and a navigation camera which displays a top-down optical view of your sample.

The nav-cam has saved me a ton of time. On RPI's older JSM-6335 FESEM, the minimum magnification is fairly high so I find myself spending several minutes moving my sample around the chamber half-blind trying to get it under the beam. With the Versa's nav-cam I'm able to set up things right the first time.

I brought up both of the beams on the aluminum sample mounting stub, then blanked them to try a new idea: Move around the sample blind, using the nav-cam only, then take single images in freeze-frame mode with one beam or the other. By reducing the total energy delivered to the sample I hoped to minimize charging.

This strategy was a complete success: I had some (not too severe) charging from the e-beam but almost no visible charging in the I-beam.

The first sample I ran on the Versa was electrically functional afterwards, but the probe pad I deposited was too thin to make reliable contact with. (It was also an XC2C64A since I had run out of 32s.) Although not a complete success, it did show that I had a working process for circuit edits.

After another batch of XC2C32As arrived, I went up to campus for another run. The signal of interest was FB2_5_FF: the flipflop for function block 2 macrocell 5. I chose this particular signal because it was the leftmost line in the second group from the left and thus easy to recognize without having to count lines in a bus.

The drilling went flawlessly, although it was a little tricky to tell whether I had gone all the way to the target wire or not in the SE view. Maybe I should start using the backscatter detector for this?

I filled in the via and made sure to put down a big pile of Pt on the probe pad so as to not repeat my last mistake.

Seen optically, the new pad was a shiny white with surface topography and a few package fragments visible through it.

At higher magnification a few slightly damaged CMP filler dots can be seen above the pad. I like to use filler metal for focusing and stigmating the ion beam at milling currents before I move to the region of interest because it's made of the same material as my target, it's something I can safely destroy, and it's everywhere - it's hard to travel a significant distance on a modern IC without bumping into at least a few pieces of filler metal.

I soldered the CPLD back onto the board and was relieved to find out that it still worked! The next step was to write some dummy code to test it out:

This is a 20-bit counter that blinks an LED at ~2 Hz from a 2048 kHz clock on the board. The second-to-last stage of the counter (so ~4 Hz) is constrained to FB2_5, the signal we're probing.
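
The frequencies follow directly from the counter width and clock. The Python below is only an arithmetic check, not the code that ran on the CPLD, and the names are invented for the example.

```python
CLOCK_HZ = 2_048_000   # 2048 kHz board clock
COUNTER_BITS = 20


def bit_frequency_hz(bit_index: int) -> float:
    """Bit k of a free-running binary counter completes one full
    high/low cycle every 2**(k + 1) clock ticks."""
    return CLOCK_HZ / 2 ** (bit_index + 1)


LED_HZ = bit_frequency_hz(COUNTER_BITS - 1)     # last stage, drives the LED
PROBED_HZ = bit_frequency_hz(COUNTER_BITS - 2)  # second-to-last stage, FB2_5
print(LED_HZ, PROBED_HZ)
```

The last stage works out to just under 2 Hz and the probed stage to just under 4 Hz, matching the approximate figures above.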

After making sure things still worked I attached the board's plastic standoffs to a 4" scrap silicon wafer with Gorilla Glue to give me a nice solid surface I could put on the prober's vacuum chuck.

Earlier today I went back to the cleanroom. After dealing with a few annoyances (for example, the prober with a wide range of Z axis travel, necessary for this test, was plugged into the electrical test station with curve tracing capability but no oscilloscope card) I landed a probe on the bond pad for VCCIO and one on ground to sanity check things. 3.3V... looks good.

Moving carefully, I lifted the probe up from the 3.3V bond pad and landed it on my newly added probe pad.

It took a little bit of tinkering with the test unit to figure out where all of the trigger settings were, but I finally saw a ~1.8V, 4 Hz square wave. Success!

There's still a bit of tweaking needed before I can demo it to my students (among other things, the oscilloscope subsystem on the tester insists on trying to use the 100V input range, so I only have a few bits of ADC precision left to read my 1.8V waveform) but overall the attack was a success.

Once I knew what all of the wires in the ZIA did, the next step was to plan an attack to read signals out.

I decapped an XC2C32A with concentrated sulfuric acid and soldered it to my dev board to verify that it was alive and kicking.

[Figure: Simple CR-II dev board with integrated FTDI USB-JTAG]

I figured that all of the exposed packaging would charge, so I'd need to coat the sample with something. I normally use sputtered Pt, but that is almost impossible to remove after deposition, so I decided to try evaporated carbon, which can be removed nicely with oxygen plasma, among other things.

I suited up for the cleanroom and met David Frey, their resident SEM/FIB expert, in front of the Zeiss 1540 FIB system. He's a former Zeiss engineer who's very protective of his "baby" and since I had never used a FIB before there was no way he was going to let me touch his, so he did all of the work while I watched. (I don't really blame him... FIB chambers are pretty cramped and it's easy to cause expensive damage by smashing into something or other. Several SEMs I've used have had one detector or another go offline for repair after a more careless user broke something.)

The first step was to mill a hole through the 900 nm or so of silicon nitride overglass using the ion beam.

[Figure: Newly added via, not yet filled]

Metal deposition in a FIB is basically CVD: a precursor gas is injected into the chamber near the sample and it decomposes under the influence of beam-generated secondary electrons.

Once the via was filled we put down a large (20 μm square) pad we could hit with an electrical probe needle.

[Figure: Probe pad]

I repeated the experiment a week or two later, this time soldering bare stub wires to the pins so I could test by plugging the chip into a breadboard directly. This failed as well, but watching my benchtop power supply gave me a critical piece of information: while VCCINT was consuming the expected power (essentially zero), VCCIO was leaking by upwards of 20 mA.

This ruled out beam-induced damage as I had not been hitting any of the I/O circuitry with the ion beam. Assuming that the carbon evaporation process was safe (it's used all the time on fragile samples, so this seemed a reasonably safe assumption for the time being), this left only the plasma clean as the potential failure point.

I realized what was going on almost instantly: the antenna effect. The bond wire and leadframe connected to each pad in the device were acting as an antenna, coupling some of the 13.56 MHz RF energy from the plasma into the input buffers, blowing out the ESD diodes and input transistors, and leaving me with a dead chip.

This left me with two possible ways to proceed: removing the coating by chemical means (a strong oxidizer could work), or not coating at all. I decided to try the latter since there were fewer steps to go wrong.

Somewhat surprisingly, the cleanroom staff had very limited experience working with circuit edits - almost all of their FIB work was process metrology and failure analysis rather than rework, so they usually coated the samples.

I decided to get trained on RPI's other FIB, the brand-new FEI Versa 3D. It's operated by the materials science staff, who are a bit less of the "helicopter parent" type and were actually willing to give me hands-on training.

[Figure: FEI Versa 3D SEM/FIB]

After a training session I was ready to go in for an actual circuit edit.

[Figure: FIB control panel]

Its other nice feature is the quad-image view which lets you simultaneously view an ion beam image, an e-beam image, the IR camera inside the chamber (very helpful for not crashing your sample into a $10,000 objective lens!), and a navigation camera which displays a top-down optical view of your sample.

The nav-cam has saved me a ton of time. On RPI's older JSM-6335 FESEM, the minimum magnification is fairly high so I find myself spending several minutes moving my sample around the chamber half-blind trying to get it under the beam. With the Versa's nav-cam I'm able to set up things right the first time.

I brought up both of the beams on the aluminum sample mounting stub, then blanked them to try a new idea: Move around the sample blind, using the nav-cam only, then take single images in freeze-frame mode with one beam or the other. By reducing the total energy delivered to the sample I hoped to minimize charging.

This strategy was a complete success: I had some (not too severe) charging from the e-beam but almost no visible charging in the I-beam.

The first sample I ran on the Versa was electrically functional afterwards, but the probe pad I deposited was too thin to make reliable contact with. (It was also an XC2C64A, since I had run out of 32s.) Although not a complete success, it did show that I had a working process for circuit edits.


[Figure: Via after drilling before backfill]

[Figure: The final probe pad, SEM image]

[Figure: Probe pad at low mag, optical image]

[Figure: Probe pad at higher magnification, optical image. Note damaged CMP filler above pad.]

    `timescale 1ns / 1ps

    module test(clk_2048khz, led);

        //Clock input
        (* LOC = "P1" *) (* IOSTANDARD = "LVCMOS33" *)
        input wire clk_2048khz;

        //LED out
        (* LOC = "P38" *) (* IOSTANDARD = "LVCMOS33" *)
        output reg led = 0;

        //Don't care where this is placed
        reg[17:0] count = 0;
        always @(posedge clk_2048khz)
            count <= count + 1;

        //Probe-able signal on FB2_5 FF at 2x the LED blink rate
        (* LOC = "FB2_5" *) reg toggle_pending = 0;
        always @(posedge clk_2048khz) begin
            if(count == 0)
                toggle_pending <= !toggle_pending;
        end

        //Blink the LED
        always @(posedge clk_2048khz) begin
            if(toggle_pending && (count == 0))
                led <= !led;
        end

    endmodule


[Figure: Test board on 4" wafer]


[Figure: Landing a probe on my pad. Note speck of dirt and bent tip left by previous user. Maybe he poked himself mounting the probe?]

[Figure: Waveform sniffed from my probe pad]

Getting my feet wet with invasive attacks, part 1: Target recon

This is part 1 of a 2-part series. Part 2, The Attack, is here.

One of the reasons I've gone a bit dark lately is that running CSCI 6974, RPI's experimental hardware reverse engineering class, has been eating up a lot of my time.

I wanted to make the final lab for the course a nice climax to the semester and do something that would show off the kinds of things that are possible if you have the right gear, so it had to be impressive and technically challenging. The obvious choice was a FIB circuit edit combined with invasive microprobing.

After slaving away for quite a while (this was started back in January or so) I've managed to get something ready to show off :) The work described here will be demonstrated in front of my students next week as part of the fourth lab for the class.

The first step was to pick a target. I was interested in the Xilinx XC2C32A for several reasons and was already using other parts of the chip as a teaching subject for the class. It's a pure-digital CMOS CPLD (no analog sense amps and a fairly regular structure) made on a relatively modern process (180 nm 4-metal UMC) but not so modern as to be insanely hard to work with. It was also quite cheap ($1.25 a pop for the slowest speed grade in VQG44 package on DigiKey) so I could afford to kill plenty of them during testing.

The next step was to decap a few, label interesting pins, and draw up a die floorplan. Here's a view of the die at the implant layer after Dash etch; P-type doping shows up as brown. (John did all of the staining work and got great results. Thanks!)

The bottom half of the die is support infrastructure with EEPROM banks for storing the configuration bitstream toward the center and JTAG/configuration stuff in a U-shape below and to either side of the memory array. (The EEPROM is mislabeled "flash" in this image because I originally assumed it was 1T NOR flash. Higher magnification imaging later showed this to be wrong; the bit cells are 2T EEPROM.)

The top half of the die is the actual programmable logic, laid out in a "butterfly" structure. The center spine is the ZIA (global routing, also referred to as the AIM in some datasheets), which takes signals from the 32 macrocell flipflops and 33 GPIO pins and routes them into the function blocks. To either side of the spine are the two FBs, which consist of an 80 x 56 AND array (simplifying a bit... the actual structure is more like 2 blocks x 20 rows x 2 interleaved cells x 56 columns), a 56 x 16 OR array, and 16 macrocells.

I wanted some interesting data to show my students so there were two obvious choices. First, I could try to defeat the code protection somehow and read bitstreams out of a locked device via JTAG. Second, I could try to read internal device state at run time. The second seemed a bit easier so I decided to run with it (although defeating the lock bits is still on my longer-term TODO.)

The obvious target for probing internal runtime state is the ZIA, since all GPIO inputs and flipflop states have to go through here. Unfortunately, it's almost completely undocumented! Here's the sum total of what DS090 has to say about it (pages 5-6):

The basic ZIA structure was pretty obvious from inspection of the implant layer: 20 identical copies of the same logic. This suggested that each row was responsible for feeding two signals left and two right.

SEM imaging of the implant layer showed the basic structure to be largely, but not entirely, symmetric about the left-right axis. At the far outside a few cells of the PLA AND array can be seen. Moving toward the center is what appears to be a 3-stage buffer, presumably for driving the row's output into the PLA. The actual routing logic is at center.

The row appeared entirely symmetric top-to-bottom so I focused my future analysis on the upper half.

Looking at the top metal layer revealed the expected 65 signals.

The signals were grouped into six groups with 11, 11, 11, 11, 11, and 10 signals in them. This led me to suspect that there was some kind of six-fold structure to the underlying circuitry, a suspicion which was later proven correct.

Inspection of the configuration EEPROM for the ZIA showed it to be 16 bits wide by 48 rows high.

Since the global configuration area in the middle of the chip was 8 rows high this suggested that each of the 40 remaining EEPROM rows configured the top or bottom half of a ZIA row.

Of the 16 bits in each row, 8 bits presumably controlled the left-hand output and 8 controlled the right. This didn't make a lot of sense at first: dense binary coding would require only 7 bits for 65 channels and one-hot coding would need 65 bits.
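To make the bit-budget puzzle concrete, here is a short Python sketch of the arithmetic. All of the numbers are the ones quoted above; the constant names are just labels I picked for illustration.

```python
ZIA_INPUTS = 32 + 33        # macrocell flipflops + GPIO pins
EEPROM_ROWS = 48            # rows in the ZIA configuration EEPROM
GLOBAL_CONFIG_ROWS = 8      # rows used by the global config area
ZIA_ROWS = 20               # identical rows seen at the implant layer
BITS_PER_EEPROM_ROW = 16

# EEPROM rows left over for each physical ZIA row (top half + bottom half).
eeprom_rows_per_zia_row = (EEPROM_ROWS - GLOBAL_CONFIG_ROWS) // ZIA_ROWS

# Each EEPROM row is split between a left-hand and a right-hand output.
bits_per_output = BITS_PER_EEPROM_ROW // 2

# Bits a flat 65:1 mux would need under the two obvious encodings.
binary_bits = (ZIA_INPUTS - 1).bit_length()
one_hot_bits = ZIA_INPUTS

print(eeprom_rows_per_zia_row, bits_per_output, binary_bits, one_hot_bits)
```

Eight bits per output is one more than dense binary needs and far fewer than one-hot would take, which is why a flat 65:1 mux doesn't fit either encoding.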

Reading documentation for related device families sometimes helps to shed some light on how a part was designed, so I took a look at some of the whitepapers for the older 350 nm CoolRunner XPLA3 series. They went into some detail on how full crossbar routing was wasteful of chip area and often not necessary to get sufficient routability. You don't need to be able to generate all 40! permutations of a given subset of signals as long as you can route every signal somehow. Instead, the XPLA3's designers connected only a handful of the inputs to each row and varied the input selection for each row so as to allow almost every possible subset to be selected somehow.

This suggested a 2-level hierarchy to the ZIA mux. Instead of being a 65:1 mux it was a 65:N hard-wired mux followed by an N:1 programmable mux feeding left and another N:1 feeding right. 6 seemed to be a reasonable guess for N, given the six groups of wires on metal 4.

This hypothesis was quickly confirmed by looking at M3 and M3-M4 vias: Each row had six short wires on M3, one under each of the six groups of wires in the bus. Each of these short lines was connected by one via to one of the bus lines on M4. The via pattern varied from row to row as expected.

I extracted the full via pattern by copying a tracing of M4 over the M3 image and using the power vias running down the left side as registration marks. (Pro tip: Using a high accelerating voltage, like 20 kV, in a SEM gives great results on aluminum processes with tungsten via plugs. You get backscatters from vias through the metal layer that you can use for aligning image stacks.) A few of the rows are shown above.

At this point I felt I understood most of the structure so the next step was full circuit extraction! I had John CMP a die down to each layer and send them to me for high-res imaging in the SEM.

The output buffers were fairly easy. As I expected they were just a 3-stage inverter cascade.

Nothing interesting was present on any of the upper layers above here, just power distribution.

The one surprising thing about the output buffer was that the NMOS on the third stage had a substantially wider channel than the PMOS. This is probably something to do with optimizing output rise/fall times.

Looking at the actual mux logic showed that it was mostly tiles of the same basic pattern (a 6T SRAM cell, a 2-input NOR gate, and a large multi-fingered NMOS pass transistor) except for the far left side.

The same SRAM-feeding-NOR2 structure is seen, but this time the output is a small NMOS or PMOS pass transistor.

After tracing M1, it became obvious what was going on.

The upper and lower halves control the outputs to function blocks 1 and 2 respectively. The two SRAM bits allow each output (labeled MUXOUT_FBx) to be pulled high, low, or float. A global reset line of some sort, labeled OGATE, is used to gate all logic in the entire ZIA (and presumably the rest of the chip); when OGATE is high the SRAM bits are ignored and the output is forced high.

Here's what it looks like in schematic:

In the schematics I drew the NOR2v0x1 cell as its de Morgan dual (AND with inverted inputs) since this seemed to make more sense in the context of the circuit: the output is turned on when the active-low input is low and OGATE is turned off.

It's interesting to note that while almost all of the config bits in the circuit are active-low, PULLUP is active-high. This is presumably done to allow the all-ones state (a blank EEPROM array) to put the muxes in a well-defined state rather than floating.

Turning our attention to the rest of the mux array shows a 6:1 one-hot-coded mux made from NMOS pass transistors. This, combined with the 2 bits needed for the pull-high/pull-low module, adds up to the expected 8. The same basic pattern shown below is tiled three times.
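For readers who think better in code, here is a rough logic-level Python model of one ZIA output as described so far: six hard-wired taps, a 6:1 one-hot select, and the two pull bits. Several things here are my assumptions, not extracted facts: the function and parameter names, the example tap indices, the polarity of the pull-down bit (assumed active-low like the select bits), and the priority I give the pull bits over the mux. Real silicon would see contention rather than a clean priority.

```python
def zia_mux_output(bus, taps, select_n, pullup, pulldown_n, ogate=False):
    """Model one ZIA output (one half of one row) at the logic level.

    bus        -- sequence of 65 booleans, the global ZIA bus
    taps       -- 6 bus indices hard-wired by vias for this row (hypothetical)
    select_n   -- 6 active-low one-hot select bits
    pullup     -- active-high pull-up bit
    pulldown_n -- pull-down bit, ASSUMED active-low like the select bits
    ogate      -- global gate; when high, config is ignored and output is high
    Returns True/False, or None if the output would float or be contended.
    """
    if ogate or pullup:
        return True
    if not pulldown_n:
        return False
    selected = [i for i, bit_n in enumerate(select_n) if not bit_n]
    if len(selected) != 1:
        return None  # nothing (or more than one thing) driving the line
    return bus[taps[selected[0]]]


bus = [False] * 65
bus[47] = True
taps = (3, 14, 25, 36, 47, 58)  # hypothetical via pattern, one tap per group

# Blank EEPROM (all ones): no input selected, pull-up on, so a defined high.
blank = zia_mux_output(bus, taps, (1,) * 6, pullup=1, pulldown_n=1)

# Select the fifth tap (bus line 47) with both pulls disabled.
routed = zia_mux_output(bus, taps, (1, 1, 1, 1, 0, 1), pullup=0, pulldown_n=1)
```

The 6 select bits plus the 2 pull bits account for the 8 configuration bits per output.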

(Sorry for the misalignment of the contact layer; this was a quick tracing, and as long as I was able to make sense of the circuit I didn't bother polishing it up to look pretty!)

The resulting schematic:

M2 was used for some short-distance routing as well as OGATE, power/ground busing, and the SRAM bit lines.

M3 was used for OGATE, power busing, SRAM word lines, the mask-programmed muxes, and the tri-state bus within the final mux.

And finally, M4. I never found out what the leftmost power line went to; it didn't appear to be VCCINT or ground but was obviously power distribution. There's no reason for VCCIO to be running down the middle of the array, so maybe VCCAUX? Reversing the global config logic may provide the answer.

A bit of trial and error poking bits in bitstreams was sufficient to determine the ordering of signals. From right to left we have FB1's GPIO pins, the input-only pin, FB2's GPIO pins, then FB1's flipflops and finally FB2's flipflops.
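That ordering can be written down as a small lookup table. The Python sketch below builds the right-to-left list of bus lines. The class order comes from the paragraph above, and the 16/1/16 GPIO split is inferred from the 33 GPIO pins and two function blocks. The signal names and the ascending numbering within each class are placeholders of mine, since the ordering inside each class isn't stated here.

```python
# Signal classes in right-to-left order across the ZIA bus, with line counts.
ZIA_CLASSES = [
    ("FB1_GPIO", 16),
    ("INPUT_ONLY", 1),
    ("FB2_GPIO", 16),
    ("FB1_FF", 16),
    ("FB2_FF", 16),
]


def zia_bus_order():
    """Return placeholder names for all bus lines, rightmost line first."""
    order = []
    for name, count in ZIA_CLASSES:
        if count == 1:
            order.append(name)
        else:
            # ASSUMPTION: ascending 1..N within a class; the real order
            # inside each class is not given in the text.
            order.extend(f"{name}_{i}" for i in range(1, count + 1))
    return order


order = zia_bus_order()
print(len(order), order[0], order[16], order[-1])
```

The total comes out to the same 65 lines seen on metal 4, and to the 11+11+11+11+11+10 grouping.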

Now that I had good intel on the target, it was time to plan the strike!

Part 2, The Attack, is here.


[Figure: XC2C32A die floorplan after Dash etch]


The obvious target for probing internal runtime state is the ZIA, since all GPIO inputs and flipflop states have to go through here. Unfortunately, it's almost completely undocumented! Here's the sum total of what DS090 has to say about it (pages 5-6):

"The Advanced Interconnect Matrix is a highly connected low power rapid switch. The AIM is directed by the software to deliver up to a set of 40 signals to each FB for the creation of logic. Results from all FB macrocells, as well as, all pin inputs circulate back through the AIM for additional connection available to all other FBs as dictated by the design software. The AIM minimizes both propagation delay and power as it makes attachments to the various FBs."

Thanks for the tidbit, Xilinx, but this really isn't gonna cut it. I need more info!


[Figure: Single row of the ZIA seen at the implant layer after Dash etch. Light gray is P-type doping, medium gray is N-type doping, dark gray is STI trenches.]

[Figure: Single row of the ZIA seen on metal 4]


[Figure: ZIA configuration EEPROM (top few rows)]


[Figure: ZIA mux structure]

[Figure: ZIA M3-M4 vias]


[Figure: Output buffer poly/diffusion/contact tracing]

[Figure: Output buffer M1 tracing]

[Figure: Output buffer gate-level schematic]

[Figure: Individual cell schematics]


[Figure: Gate-level layout of mux area]

[Figure: Left side of mux area, gate-level layout]


[Figure: Left side of mux area, M1]


[Figure: Gate-level schematics of pullup/pulldown logic]

[Figure: Cell schematics]


[Figure: Basic mux tile, poly/implant]

[Figure: Basic mux tile, M1]


[Figure: Schematic of muxes]


[Figure: M2 and M2-M3 vias]


[Figure: M3 and M3-M4 vias]


[Figure: M4]


Monday, March 24, 2014

Microchip PIC32MZ process vs PIC32MX

Those of you keeping an eye on the MIPS microcontroller world have probably heard of Microchip's PIC32 series parts: MIPS32 CPU cores licensed from MIPS Technologies (bought by Imagination Technologies recently) paired with peripherals designed in-house by Microchip.

Although they're sold under the PIC brand name they have very little in common with the 8/16 bit PIC MCUs. They're fully pipelined processors with quite a bit of horsepower.

The PIC32MX family was the first to be introduced, back in 2009 or so. They're a MIPS M4K core at up to 80 MHz and max out at 128 KB of SRAM and 512 KB of NOR flash plus a fairly standard set of peripherals.

Somewhat disappointingly, the PIC32MX MMU is fixed mapping and there is no external bus interface. Although there is support for user/kernel privilege separation, all userspace code shares one address space. Another minor annoyance is that all PIC32MX parts run from a fixed 1.8V on-die LDO which normally cannot (the 300 series is an exception) be disabled or bypassed to run from an external supply.

The PIC32MZ series is just coming out now. They're so new, in fact, that they show as "future product" on Microchip's website and you can only buy them on dev boards, although I'm told that by around Q3-Q4 of this year they'll be reaching distributors. They fix a lot of the complaints I have with PIC32MX and add a hefty dose of speed: 200 MHz max CPU clock and an on-die L1 cache.

On-chip memory in the PIC32MZ is increased to up to 512 KB of SRAM and a whopping 2 MB of flash in the largest part. The new CPU core has a fully programmable MMU and support for an external bus interface capable of addressing up to 16MB of off-chip address space.

I'm a hacker at heart, not just a developer, so I knew the minute I got one of these things I'd have to tear it down and see what made it tick. I looked around for a bit, found a $25 processor module on Digikey, and picked it up.

The board was pretty spartan, which was fine by me as I only wanted the chip.

Less than an hour after the package had arrived, I had the chip desoldered and simmering away in a beaker of sulfuric acid. I had done a PIC32MX340F512H a few days previously to provide comparison shots.

Without further ado, here's the top metal shots:

These photos aren't to scale: the MZ is huge (about 31.9 mm²). By comparison, the MX is around 20 mm².

From an initial impression, we can see that although both run at the same core voltage (1.8V) the MZ is definitely a new, significantly smaller fab process. While the top layer of the MX is fine-pitch signal routing, the top layer of the MZ is (except in a few blocks which appear to contain analog circuitry) completely filled with power distribution routing.

Thick power distribution wiring on the top layer is a hallmark of deep-submicron processes, 130 nm and below. Most 180 nm or larger devices have at least some signal routing on the top layer.

Looking at the mask revision markings gives a good hint as to the layer count and stack-up.

The MZ appears to be one thick aluminum layer and five thin copper layers for a total of six, while the MX is four layers and probably all aluminum.

Enough with the top layer... time to get down! Both samples were etched with HF until all metal and poly was removed.

The first area of interest was the flash.

Both arrays appear to be the same standard NOR structure, although the MZ's array is quite a bit denser: the bit cell pitch is 643 x 270 nm (0.173 μm²/bit) while the MX's is 1015 x 676 nm (0.686 μm²/bit). The 3.96x density increase suggests a roughly 2x process shrink.

The white cylinders littering the MX die are via plugs, most likely tungsten, left over after the HF etch. The MZ appears to use a copper damascene process without via plugs, although since no cross section was performed details of layer thicknesses etc are unavailable.

The next target was the SRAM.

Here we start to see significant differences. The MX uses a fairly textbook 6T "doughnut + H" SRAM structure while the MZ uses a more modern lithography-optimized pattern made of all straight lines with no angles, which is easier to etch. This kind of bit cell is common in leading-edge processes but this is the first time I've seen it in a commodity MCU.

Cell pitch for the MZ is 1345 x 747 nm (1.00 μm²/bit) while the MX is 1895 x 2550 nm (4.83 μm²/bit). This is a 4.83x increase in density.
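The density figures for both the flash and the SRAM are easy to reproduce. Here is a small Python sketch using the measured cell pitches quoted in this post; the helper names are mine. Working from the unrounded pitches gives ratios a hair lower than the ones quoted, which were computed from the rounded areas. The square root of the area ratio is a rough estimate of the linear shrink, assuming the cell layout scales uniformly, which it clearly doesn't for the redesigned SRAM cell.

```python
import math


def cell_area_um2(pitch_x_nm, pitch_y_nm):
    """Bit cell area in square microns from the x/y cell pitch in nm."""
    return pitch_x_nm * pitch_y_nm / 1e6


def compare(mz_pitch_nm, mx_pitch_nm):
    """Return (MZ area, MX area, density ratio, implied linear shrink)."""
    mz = cell_area_um2(*mz_pitch_nm)
    mx = cell_area_um2(*mx_pitch_nm)
    ratio = mx / mz
    return mz, mx, ratio, math.sqrt(ratio)


flash = compare((643, 270), (1015, 676))
sram = compare((1345, 747), (1895, 2550))

for name, (mz, mx, ratio, shrink) in (("flash", flash), ("SRAM", sram)):
    print(f"{name}: MZ {mz:.3f} um^2, MX {mx:.3f} um^2, "
          f"{ratio:.2f}x denser, ~{shrink:.2f}x linear")
```

Either way, the flash numbers land very close to the 2x linear shrink you'd expect going from a 250 nm process to a 130 nm one.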

The last area of interest was the standard cell array for the CPU.

Channel length was measured at 125-130 nm for the MZ and 250-260 nm for the MX.

Both devices also had a significant number of dummy cells in the gate array, suggesting that the designs were routing-constrained.

In conclusion, the PIC32MZ is a significantly more powerful 130 nm upgrade to the slower 250 nm PIC32MX family. If Microchip fixes most of the silicon bugs before they launch I'll definitely pick up a few and build some stuff with them.

I wasn't able to positively identify the fab either device was made on. However, the fill patterns and power distribution structure on the MZ are very similar to those of the TI AM1707, which is fabricated by TSMC, so they're my first guess.

For more info and die pics check out the SiliconPr0n pages for the two chips:


[Figure: PIC32MX microcontroller]

Somewhat disappointingly, the PIC32MX MMU is fixed mapping and there is no external bus interface. Although there is support for user/kernel privilege separation, all userspace code shares one address space. Another minor annoyance is that all PIC32MX parts run from a fixed 1.8V on-die LDO which normally cannot (the 300 series is an exception) be disabled or bypassed to run from an external supply.

The PIC32MZ series is just coming out now. They're so new, in fact, that they show as "future product" on Microchip's website and you can only buy them on dev boards, although I'm told they'll be reaching distributors by around Q3-Q4 of this year. They fix a lot of the complaints I have with PIC32MX and add a hefty dose of speed: 200 MHz max CPU clock and an on-die L1 cache.

Figure: PIC32MZ microcontroller

On-chip memory in the PIC32MZ is increased to up to 512 KB of SRAM and a whopping 2 MB of flash in the largest part. The new CPU core has a fully programmable MMU and support for an external bus interface capable of addressing up to 16 MB of off-chip address space.

I'm a hacker at heart, not just a developer, so I knew the minute I got one of these things I'd have to tear it down and see what made it tick. I looked around for a bit, found a $25 processor module on Digikey, and picked it up.

The board was pretty spartan, which was fine by me as I only wanted the chip.

Figure: PIC32MZ processor module

Without further ado, here are the top metal shots:

Figure: PIC32MX340F512H

Figure: PIC32MZ2048ECH

From an initial impression, we can see that although both run at the same core voltage (1.8V) the MZ is definitely a new, significantly smaller fab process. While the top layer of the MX is fine-pitch signal routing, the top layer of the MZ is (except in a few blocks which appear to contain analog circuitry) completely filled with power distribution routing.

Figure: Top layer closeups of MZ (left), MX (right), same scale

Thick power distribution wiring on the top layer is a hallmark of deep-submicron processes, 130 nm and below. Most 180 nm or larger devices have at least some signal routing on the top layer.

Looking at the mask revision markings gives a good hint as to the layer count and stack-up.

Figure: Mask rev markings on MZ (left), MX (right), same scale

Enough with the top layer... time to get down! Both samples were etched with HF until all metal and poly were removed.

The first area of interest was the flash.

Figure: NOR flash on MZ (left), MX (right), different scales

The white cylinders littering the MX die are via plugs, most likely tungsten, left over after the HF etch. The MZ appears to use a copper damascene process without via plugs, although since no cross section was performed, details of layer thicknesses etc. are unavailable.

The next target was the SRAM.

Figure: 6T SRAM on MZ (left), MX (right), different scales

Figure: Closeup of standard cells on MZ (left), MX (right), different scales

Figure: Dummy cells in MZ

Figure: Dummy cells in MX

Tuesday, February 4, 2014

Process overview: UMC 180nm eNVM

I've been reverse engineering a programmable logic device (Xilinx XC2C32A) made on UMC's 180nm eNVM process for the last few months and have been a little light on blog posts. I'm a big fan of the process writeups Chipworks does so I figured I'd try my hand at one ;)

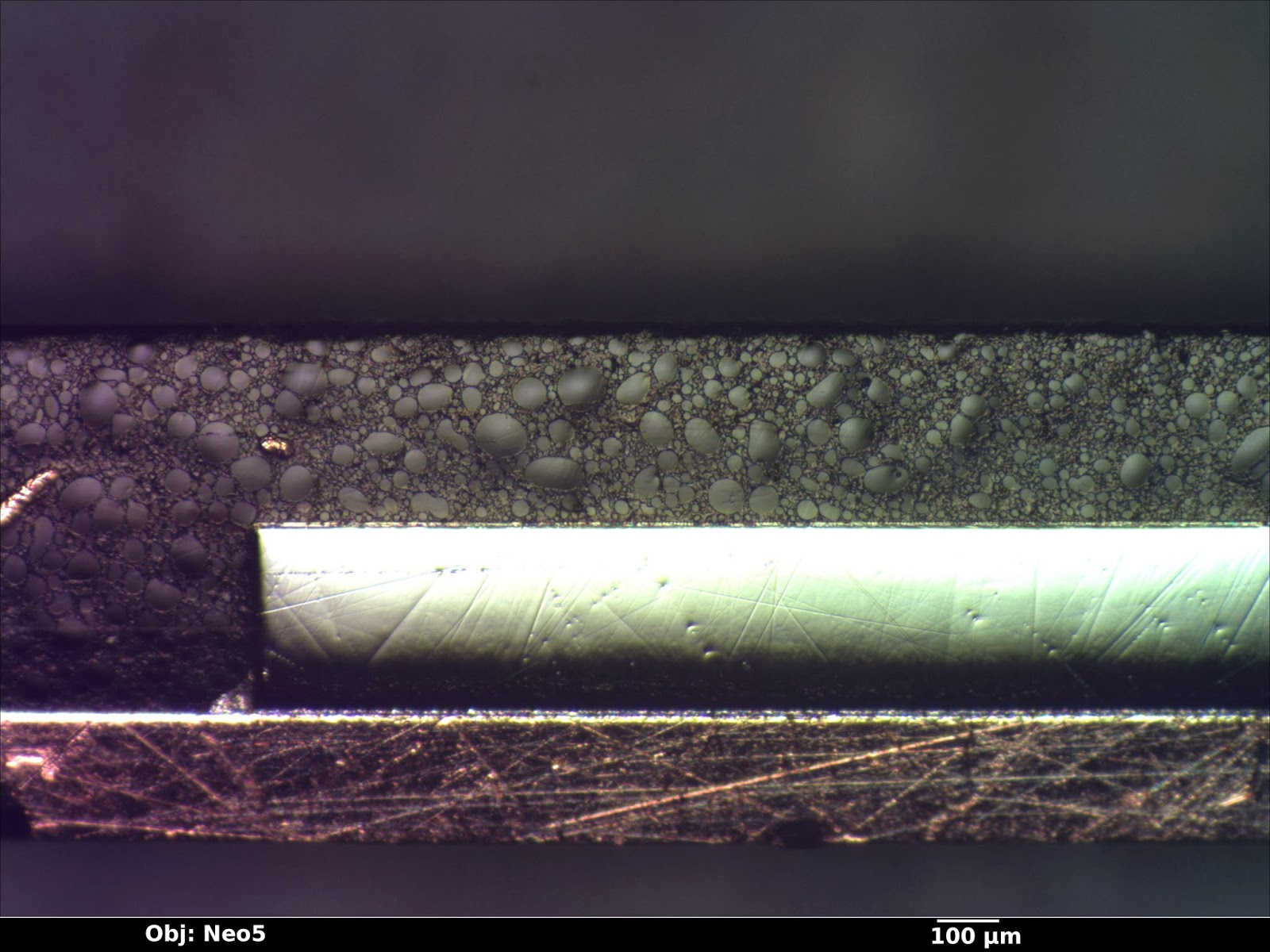

The target devices were packaged in a 32-pin QFN. The first part of the analysis was sanding the entire package down to the middle of the device and polishing with diamond paste to get a quick overview of the die and packaging stack. (Shops with big budgets normally use X-ray systems for this.) There were a few scratches in the section from sanding, but since the closeups were going to be done on another die it wasn't necessary to polish them out.

Total die thickness including BEOL was just over 300 μm. From the optical image, four layers of metal can be seen. The whitish color hinted that it was aluminum rather than copper, but there's no sense jumping to conclusions since the next die was about to hit the SEM.

A second specimen was depackaged using sulfuric acid, sputtered in platinum to reduce charging, and sectioned using a gallium ion FIB at a slight angle to the east-west routing.

From this image, it is easy to get some initial impressions of the process:

M1 to M3 have pretty much identical stackups except for slight thickness differences: 100 nm of Ti-based adhesion/barrier layer, 400-550 nm of aluminum conductor, then another barrier layer of similar composition. M4 is slightly thicker (850 nm of aluminum) with the same barrier thickness.
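Adding up the stack gives the total thickness of each metal layer. This is a minimal sketch that assumes the nominal 100 nm barrier on both the top and bottom of every layer; the constant and function names are only for illustration.

```python
# Nominal figures from the cross section, in nanometres.
BARRIER_NM = 100                # Ti-based adhesion/barrier layer (top and bottom)
M1_M3_ALUMINUM_NM = (400, 550)  # observed range of aluminum thickness on M1-M3
M4_ALUMINUM_NM = 850


def stack_thickness_nm(aluminum_nm, barrier_nm=BARRIER_NM):
    """Total layer thickness: bottom barrier + aluminum + top barrier."""
    return barrier_nm + aluminum_nm + barrier_nm


m1_m3_range = tuple(stack_thickness_nm(t) for t in M1_M3_ALUMINUM_NM)
m4_total = stack_thickness_nm(M4_ALUMINUM_NM)

print(f"M1-M3 total: {m1_m3_range[0]}-{m1_m3_range[1]} nm")
print(f"M4 total:    {m4_total} nm")
```

With these nominal values M1-M3 come out at 600-750 nm and M4 at 1050 nm, so the barrier metal accounts for a substantial fraction of the thinner layers.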

The first overglass layer is the same material (silicon dioxide) as ILD; thickness ranges from slightly below the top of M4 to 630 nm above the top. The second overglass layer has a slightly higher backscatter yield (EDIT: confirmed by EDS to be silicon nitride) and is about 945 nm thick.

M1-3 pitch is just over 600 nm, while the smallest observed M4 pitch is 1 μm.

A closer view of M3 shows the barrier metals in more detail. The barrier is a bit over 100 nm thick normally but thins to about 45 nm underneath upward-facing vias, suggesting that the ILD etch for drilling via holes also damages the barrier material. A small amount (around 30 nm) of sagging is present over the top of downward-facing vias.

Via sidewalls are coated with barrier metal as well; however, it is significantly thinner (20 nm vs. 100 nm) than the metal layer barrier. The vias themselves are polycrystalline tungsten. Grain structure is clearly visible in the secondary electron image below.

(Note: The structure at left of the image is the edge of the FIB trench and stray material deposited by the ion beam and is not part of the actual device. The lower via is at a slight angle to the section so it was not entirely sliced open.)

The metal aspect ratio ranges from 3:1 on M1 to 1.5:1 on M4.

Now for the most interesting area - the transistors themselves!

The cross section was taken down the center of the CPLD's PLA OR array between two rows of 6T SRAM cells. Two PMOS transistors from each of two SRAM cells are visible in the closeup below.

Contacted gate pitch is 920 nm, for total cell width (including the 1180 nm of STI trench) of 2.9 μm. Plan view imaging shows total cell dimensions to be 2.9 x 3.3 μm or 9.5 μm². This is a bit large for the 180 nm node and probably reflects the need to leave space on M1 and M2 for routing SRAM cell output to the programmable logic array.
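One common way to compare cell sizes across nodes is to normalize the area to F², the square of the nominal feature size. The sketch below does that for this cell, taking F as 180 nm. Note that multiplying the rounded dimensions gives a slightly larger area than the 9.5 μm² quoted above, presumably because that figure came from unrounded measurements.

```python
FEATURE_NM = 180                 # nominal feature size F for this node
CELL_W_UM, CELL_H_UM = 2.9, 3.3  # plan view cell dimensions quoted above

area_um2 = CELL_W_UM * CELL_H_UM

# Convert F to micrometres, then express the cell area in units of F^2.
feature_um = FEATURE_NM / 1000
area_f2 = area_um2 / feature_um ** 2

print(f"Cell area: {area_um2:.2f} um^2")
print(f"Normalized: {area_f2:.0f} F^2")
```

The normalized figure makes it easier to set this cell against SRAM cells measured on other processes without the node size getting in the way.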

Some variability in etch depth and sidewall slope is clearly visible on M1.

The polysilicon layer was hard to see in this view but is probably around 50 nm thick, topped by about 135 nm of cobalt silicide. (Gate oxide thickness isn't visible under SEM at the 180 nm node and I haven't yet had time to prepare a TEM sample.)

Source/drain contacts are made with a 70 nm thick cobalt silicide layer. All vias in the entire process appear to be about the same size (300 nm diameter); however, the silicide contact pads are larger (465 nm).

Gate length is almost exactly 180 nm - measurement of the SEM image shows 175 nm +/- 12 nm.
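The claim that the measurement matches the node name can be checked by seeing whether the nominal value falls inside the measurement's error band. This is a trivial sketch with illustrative names, treating the +/- 12 nm as a simple symmetric bound.

```python
NOMINAL_NM = 180     # gate length implied by the node name
MEASURED_NM = 175    # value read off the SEM image
UNCERTAINTY_NM = 12  # quoted measurement uncertainty

low_nm = MEASURED_NM - UNCERTAINTY_NM
high_nm = MEASURED_NM + UNCERTAINTY_NM

# The measurement is consistent with the node if the band contains the nominal value.
consistent = low_nm <= NOMINAL_NM <= high_nm

print(f"Measured band: {low_nm}-{high_nm} nm")
print(f"Consistent with {NOMINAL_NM} nm nominal: {consistent}")
```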

Overall, the process seems fairly typical except for its use of aluminum for interconnect. It was a fun analysis and if I have time I may try to do a TEM cross section of both PMOS and NMOS transistors in the future. My main interest in the chip is netlist extraction, though, so this isn't a high priority.

I may also do a second post on the Flash portion of the chip.

EDIT: Decided to post a plan view SEM image of the flash array active area. This is after Dash etch; P-type areas have oxide grown over them. Poly has been stripped. The left-hand flash area is ten bits wide and stores configuration for function block 2's macrocells plus a "valid" bit. The right-hand area stores configuration for FB2's PLA (including both the AND and OR arrays, but not global routing).

Finally, I would like to give special thanks to David Frey at the RPI cleanroom for assistance with the FIB cross section.

Figure: Packaged device cross section

Figure: Packaged device cross section

Figure: FIB cross section of metal stack

- The overglass consists of two layers of slightly different compositions, possibly an oxide-nitride stack.

- The process is planarized up to metal 4, but not including overglass.

- Metal has an adhesion/barrier layer at the top and bottom but not the sides, and is wider at the bottom than the top. This rules out damascene patterning and suggests that the metal layers are dry-etched aluminum.

- Silicide layers are visible above the polysilicon gates and at the source/drain implants.

- Vias have a much higher atomic number than the metal layers, probably tungsten.

- Stacked vias are allowed and used frequently.

- Well isolation is STI.

Figure: EDS spectrum of wire on M4

Figure: M3 with upward/downward vias in cross section.

Figure: EDS spectrum of M1-M2 via area

Figure: SRAM cell structure and PLA AND array after metal/poly removal and Dash etch. (P-type implants are raised due to oxide growth from stain.)

Figure: Active area contacts and PMOS transistors

Figure: EDS spectrum of active-M1 contact

Figure: Closeup of PLA AND array after Dash etch showing PMOS and NMOS channels
